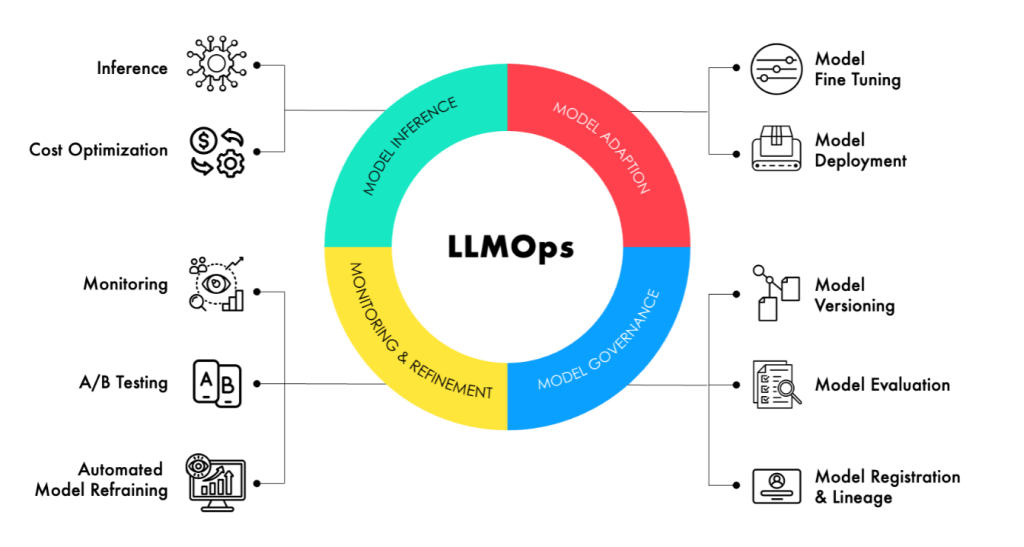

Building a generative AI model is an achievement. Keeping it running reliably, cost-efficiently, and with continuously improving quality in enterprise production is a discipline. LLMOps and Deployment Solutions are the practices and infrastructure that make production generative AI sustainable — and a world-class Generative AI Company is distinguished as much by its operational excellence as by its development capability.

The Operational Excellence Imperative

Organisations that have deployed generative AI to production are discovering that its operational demands are more intensive than those of traditional software. Models can degrade as data distributions shift. Inference costs can escalate as usage grows. Security vulnerabilities specific to language models require ongoing attention. And the pace of model improvement means that deployment decisions made today may need to be revisited as better options become available. LLMOps and Deployment Solutions address each of these demands systematically.

Model Monitoring and Quality Assurance

A Generative AI Company that delivers mature LLMOps and Deployment Solutions will instrument every production model with comprehensive monitoring: output quality metrics tracked over time, latency and throughput dashboards, cost per inference tracking, and anomaly detection that alerts when model behaviour deviates from baseline. This monitoring infrastructure is what enables proactive quality management rather than reactive firefighting.
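As one illustration of the anomaly detection described above, the following is a minimal sketch rather than a production design. It assumes a single numeric metric (for example latency in milliseconds or an output quality score) and flags values that sit far from a rolling baseline. The class name `BaselineMonitor` and its parameters are hypothetical, chosen only for this example.

```python
from collections import deque
from statistics import mean, stdev


class BaselineMonitor:
    """Flags metric values that deviate from a rolling baseline (hypothetical helper)."""

    def __init__(self, window=50, threshold=3.0, min_samples=10):
        self.values = deque(maxlen=window)  # most recent non-anomalous observations
        self.threshold = threshold          # allowed distance from the mean, in standard deviations
        self.min_samples = min_samples      # do not alert until a baseline exists

    def observe(self, value):
        """Return True if `value` is anomalous relative to the current baseline."""
        anomalous = False
        if len(self.values) >= self.min_samples:
            mu = mean(self.values)
            sigma = stdev(self.values)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                anomalous = True
        # Assumption: anomalous points are kept out of the baseline so that
        # a burst of bad values does not become the new normal.
        if not anomalous:
            self.values.append(value)
        return anomalous


if __name__ == "__main__":
    monitor = BaselineMonitor()
    for latency_ms in [100.0, 102.0] * 10 + [150.0]:
        if monitor.observe(latency_ms):
            print(f"alert: latency {latency_ms} ms deviates from baseline")
```

In practice the same pattern would likely run once per metric (quality, latency, throughput, cost per inference), with the window and threshold tuned to how noisy each signal is.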

Continuous Improvement Pipelines

The best LLMOps and Deployment Solutions include continuous improvement pipelines: systematic processes for collecting user feedback, evaluating model performance against business criteria, identifying improvement opportunities, executing model updates, and deploying improvements through validated release processes. A Generative AI Company that builds these pipelines as part of the initial deployment, rather than as an afterthought, delivers AI systems that keep improving after launch.
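The "validated release process" step can be sketched as a simple release gate that compares a candidate model's evaluation results with those of the model currently in production. This is an illustrative sketch only: the `EvalResult` fields, the `release_gate` function, and the default tolerances are assumptions made for the example, not criteria taken from any particular platform.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class EvalResult:
    quality: float         # mean score on a fixed evaluation set (assumed scale 0..1)
    p95_latency_ms: float  # 95th percentile latency in milliseconds
    cost_per_query: float  # average inference cost, in any currency unit


def release_gate(
    candidate: EvalResult,
    baseline: EvalResult,
    max_quality_drop: float = 0.0,
    max_latency_increase: float = 0.10,
    max_cost_increase: float = 0.10,
) -> Tuple[bool, List[str]]:
    """Return (approved, failures) for a candidate compared with the production baseline."""
    failures = []
    if candidate.quality < baseline.quality - max_quality_drop:
        failures.append("quality regressed")
    if candidate.p95_latency_ms > baseline.p95_latency_ms * (1 + max_latency_increase):
        failures.append("latency regressed")
    if candidate.cost_per_query > baseline.cost_per_query * (1 + max_cost_increase):
        failures.append("cost regressed")
    return (not failures, failures)


if __name__ == "__main__":
    production = EvalResult(quality=0.80, p95_latency_ms=900.0, cost_per_query=0.002)
    candidate = EvalResult(quality=0.84, p95_latency_ms=950.0, cost_per_query=0.002)
    approved, failures = release_gate(candidate, production)
    print("approved" if approved else f"blocked: {failures}")
```

Returning the list of failed checks, rather than a bare boolean, gives the team a concrete reason for each blocked release, which feeds back into the "identifying improvement opportunities" step.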

Cost Optimisation

LLMOps and Deployment Solutions must address inference cost as a first-class concern. A Generative AI Company with mature operational practice will implement intelligent cost optimisation: caching responses to repeated requests, batching requests so that each GPU pass serves several users, routing each request dynamically to the least expensive model able to handle it, and raising GPU utilisation. Together these measures reduce inference cost per query compared with a naive approach that sends every request, one at a time, to the largest available model.
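Two of these techniques, response caching and dynamic model routing, can be sketched together in a few lines. The example below is a simplified illustration under stated assumptions: the models are plain Python callables standing in for real inference endpoints, the per-call costs are made-up numbers, and the routing rule (prompt length) is a deliberately naive placeholder for whatever complexity signal a real system would use. `CostAwareRouter` is a hypothetical name.

```python
import hashlib
from typing import Callable, Dict

ModelFn = Callable[[str], str]  # stand-in for a call to a real inference endpoint


class CostAwareRouter:
    """Exact-match response cache plus a naive small/large model router (hypothetical)."""

    def __init__(self, small: ModelFn, large: ModelFn,
                 small_cost: float, large_cost: float,
                 max_small_prompt_chars: int = 200):
        self.small = small
        self.large = large
        self.small_cost = small_cost    # assumed flat cost per call
        self.large_cost = large_cost
        self.max_small_prompt_chars = max_small_prompt_chars
        self.cache: Dict[str, str] = {}
        self.cache_hits = 0
        self.total_cost = 0.0

    @staticmethod
    def _key(prompt: str) -> str:
        # Hash the trimmed prompt so that cache keys have a fixed size.
        return hashlib.sha256(prompt.strip().encode("utf-8")).hexdigest()

    def complete(self, prompt: str) -> str:
        key = self._key(prompt)
        if key in self.cache:
            self.cache_hits += 1        # served from cache: no inference cost
            return self.cache[key]
        # Assumption: short prompts are simple enough for the cheaper model.
        if len(prompt) <= self.max_small_prompt_chars:
            answer = self.small(prompt)
            self.total_cost += self.small_cost
        else:
            answer = self.large(prompt)
            self.total_cost += self.large_cost
        self.cache[key] = answer
        return answer


if __name__ == "__main__":
    router = CostAwareRouter(
        small=lambda p: "small-model answer",
        large=lambda p: "large-model answer",
        small_cost=1.0,
        large_cost=10.0,
    )
    router.complete("What are your opening hours?")
    router.complete("What are your opening hours?")  # cache hit
    router.complete("Summarise this contract: " + "clause " * 100)
    print(router.total_cost, router.cache_hits)
```

Tracking `total_cost` and `cache_hits` inside the router also yields the cost-per-inference figures that a monitoring dashboard would need. An exact-match cache only helps with literally repeated prompts; whether that is enough depends on the traffic pattern.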

Conclusion

LLMOps and Deployment Solutions are the operational foundation that determines whether generative AI investments compound in value or degrade over time. A Generative AI Company that takes operational excellence as seriously as development excellence will deliver AI systems that earn stakeholder trust, improve continuously, and operate at a cost that supports sustainable scale.